Transparency is pointless if no one is watching, but there’s no way a human reporter can keep up with the open data created by a modern city, let alone a country. Software agents, sometimes called bots, can monitor vast amounts of information and provide summaries, or alert reporters when something interesting appears. While there is huge potential for advanced techniques from artificial intelligence, there are also some useful bots that can be set up today, in minutes.

The most straightforward way to monitor open data is to present it in more useful ways. Narrative Science demonstrated its Quill automated story writing product by generating textual reports from sensors monitoring the health of Chicago’s beaches. These systems make open data more accessible and understandable by converting it from one form into another. While this is valuable, bots can also “read” and analyze open data, generating news by flagging items found in a larger data stream.

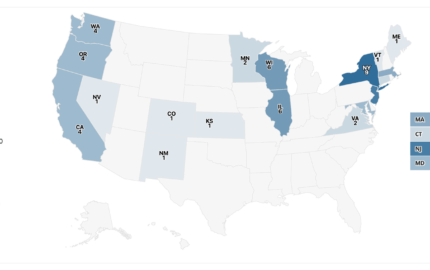

Software can look for the unusual. The Los Angeles Times crime map generates automated alerts when violent or property crime reports from the most recent week are up significantly over a neighborhood’s average. These alerts are displayed on the main crime map and on the page for each neighborhood. There is a deep question here about the right numerical threshold for triggering an alert, or more generally what words like “anomaly” or “unusual” should mean in practice. Part of the work in creating a robot reporting tool is coming up with mathematical definitions of newsworthiness, something that journalists may not be used to doing.
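To make the threshold question concrete, here is a minimal sketch of one possible definition of “unusual.” It is not the Los Angeles Times’ actual rule, which is not described here. The function name `crime_alert`, the two-standard-deviation cutoff, and the eight-week minimum history are all assumptions chosen for illustration.

```python
from statistics import mean, stdev

def crime_alert(past_weekly_counts, latest_count, z_threshold=2.0, min_weeks=8):
    """Return True when the latest week is unusually high for this neighborhood.

    "Unusual" is defined here (an assumption, not any newsroom's real rule) as
    at least `z_threshold` standard deviations above the historical average.
    """
    if len(past_weekly_counts) < min_weeks:
        return False  # too little history to say what "usual" looks like
    average = mean(past_weekly_counts)
    spread = stdev(past_weekly_counts)
    if spread == 0:
        # Every past week was identical, so any increase stands out.
        return latest_count > average
    return (latest_count - average) / spread >= z_threshold

# Hypothetical weekly report counts for one neighborhood.
history = [10, 12, 9, 11, 10, 13, 8, 11]
print(crime_alert(history, 20))  # a sharp jump
print(crime_alert(history, 11))  # an ordinary week
```

Every choice in this sketch is an editorial decision in disguise: raising `z_threshold` produces fewer, more dramatic alerts, while lowering it produces more alerts and more false alarms.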

For decades, banks and others in the financial industry have employed much more sophisticated anomaly detection software to detect fraud and other threats. Generally this is done with proprietary software on proprietary data. But there’s a huge amount of public financial data that is not regularly monitored by journalists. The Securities and Exchange Commission’s EDGAR system publishes disclosure filings from all U.S. public companies, up to 12,000 reports per day during peak periods. This data fuels a cottage industry of advanced analytics tools for investors, which reporters could also use to monitor the activities of entire industries, beyond just the specific companies that a reporter already knows might be interesting. The rise of algorithmic and high-frequency trading suggests that journalists should also be engaging in detailed analysis of market data streams with an eye towards underhanded dealings, much as financial data provider Nanex does. In 2013, Nanex analyzed trading data and found evidence suggesting insider trading: trades were executed sooner after the embargo ended than the information could possibly have been transmitted. We cannot have algorithmic accountability without robots watching the algorithms; humans are just too slow.
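The reasoning behind a timing analysis like this can be sketched in a few lines: nothing travels faster than light, so a reaction that arrives at a distant exchange sooner than light could have carried the news is suspect. The code below is an illustration of that logic only, not Nanex’s method. The function names, the 1,000 km distance, and the trade timestamps are hypothetical.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # in a vacuum; real networks are slower

def min_transit_ms(distance_km):
    """Shortest physically possible travel time for a signal, in milliseconds."""
    return distance_km / SPEED_OF_LIGHT_KM_PER_S * 1000.0

def suspiciously_early(trades, release_ms, distance_km):
    """Return trades timestamped after the release but before news could arrive.

    Each trade is a dict with a "time_ms" key (a hypothetical record format).
    """
    earliest_arrival = release_ms + min_transit_ms(distance_km)
    return [t for t in trades if release_ms <= t["time_ms"] < earliest_arrival]

# Hypothetical example: an exchange 1,000 km from where the news is released.
trades = [{"id": "a", "time_ms": 1.0}, {"id": "b", "time_ms": 3.0}, {"id": "c", "time_ms": 5.0}]
flagged = suspiciously_early(trades, release_ms=0.0, distance_km=1000.0)
print([t["id"] for t in flagged])
```

A trade inside the window is not proof of wrongdoing, since some orders would have been placed anyway. It is a lead that tells a reporter where to look, and it depends on clocks at both locations being accurately synchronized.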

This sort of work will require sophisticated new software. But there are simple bots available now to help journalists with their daily needs.

Sometimes you just want to know when somebody writes about a particular topic. The free Google Alerts service scans the Web for specific words or phrases and sends an e-mail when they appear. The names of people and organizations are obvious queries, but alerts can also track types of events: two prominent databases of police killings, Fatal Encounters and Killed By Police, use Google Alerts to find relevant incidents in local news reports.

Other simple bots watch for changes in a web page. This can be used to detect deleted content, track shifts in language as a politician or company responds to scrutiny, or create a record of who said what when. There are myriad tools for change detection, from free sites like Follow That Page to more professional services like Versionista. There is a whole subgenre of bots that monitor Wikipedia, such as @congressedits, which tweets when someone edits a page anonymously from an IP address belonging to the U.S. Congress.
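The core of a change-detection bot is simple enough to sketch with Python’s standard library. The example below is not how Follow That Page or Versionista work internally. It assumes the page text has already been downloaded and saved on each visit, and the function names and sample statements are hypothetical.

```python
import difflib
import hashlib

def fingerprint(text):
    """Short, stable summary of a page's text; it changes if any character changes."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def describe_change(old_text, new_text):
    """Return removed ("- ") and added ("+ ") lines, or [] if nothing changed."""
    if fingerprint(old_text) == fingerprint(new_text):
        return []
    diff = difflib.ndiff(old_text.splitlines(), new_text.splitlines())
    # Keep only real removals and additions; skip unchanged lines and "? " hints.
    return [line for line in diff if line.startswith(("- ", "+ "))]

# Hypothetical snapshots of a statement saved on two different days.
monday = "We oppose the bill.\nContact us."
tuesday = "We support the bill.\nContact us."
for line in describe_change(monday, tuesday):
    print(line)
```

Storing only the fingerprint is enough to know that a page changed. Storing the full text of each snapshot is what lets a reporter show exactly what was deleted or reworded.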

We can expect to see much more sophisticated bots make their way into journalism in the next few years. IBM’s Watson technology is best known for defeating “Jeopardy!” champions at their own game in February 2011. But the ability to answer general questions by instantly consulting a large body of reference material has applications far beyond game shows, and IBM has since invested more than a billion dollars in a new division devoted to cognitive services. One of the first big applications is health care. IBM has collaborated with Memorial Sloan Kettering Cancer Center, where the system aims to assist doctors in diagnosing complex cancers by comparing a patient’s case to huge databases of medical research.

This kind of artificial intelligence technology will be developed first for fields such as law, finance, and intelligence, where there are large business opportunities. But consider what it could do for journalists. Imagine telling a newsroom AI to watch campaign finance disclosures, SEC filings, and media reports for suspicious business deals that could signal undue political influence. The goal is a system powerful enough to scrutinize every available open data feed, understand what each data point means in context by comparing it to databases of background knowledge and current events, and alert reporters when something looks fishy or interesting.

This dream is a ways off, but the necessary technology is under active development within powerful industries. If this technology can be imported into journalism, it may change the field in significant ways. The definition of news will shift toward things that can be expressed to a computer, which seems a little inhuman but could end up being more transparent and systematic than the tenets of “news judgment.” As a result of this systematization, journalists and their readers will expect to be everywhere, monitoring every government department and every multinational corporation, not just the ones that have caught our attention recently.