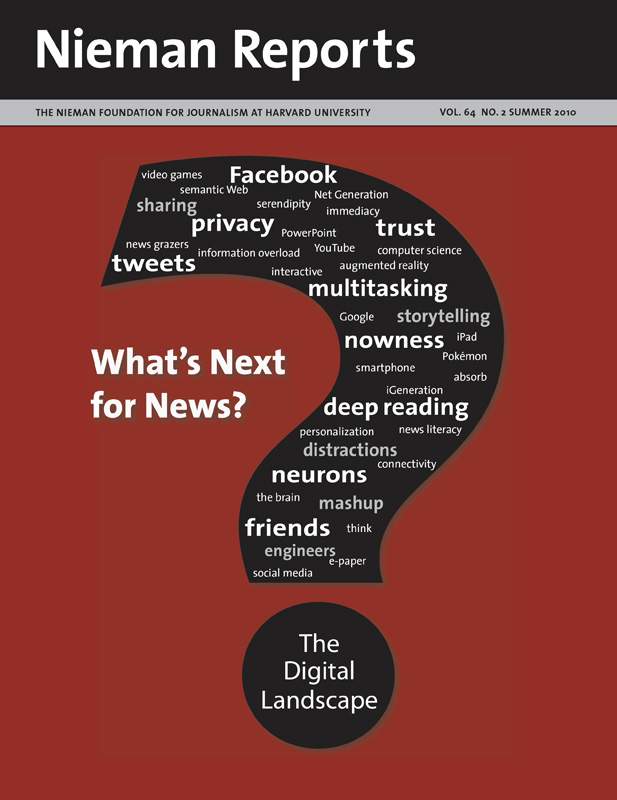

The Digital Landscape: What's Next for News?


In the movie “Terminator,” humanity started down the path to destruction when a supercomputer called Skynet began to grow smarter on its own. I was reminded of that possibility during my research about the semantic Web.

RELATED ARTICLE

"For a Start-up, Machine-Generated Stories Are the Name of the Game"

- Jan Gardner

Never heard of the semantic Web? I don’t blame you. Much of it is still in the lab, the plaything of academics and computer scientists. To hear some of them debate it, the semantic Web will evolve, like Skynet, into an all-powerful thing that can help us understand our world or create various crises when it starts to develop a form of connected intelligence.

Intrigued? I was. Particularly when I asked computer scientists about how this concept could change journalism in the next five years. The true believers say the semantic Web could help journalists report complex, ever-changing stories and reach new audiences. The critics doubt the semantic Web will be anything but a high-tech fantasy. But even some of the doubters are willing to speculate that computers using pieces of the semantic Web will increasingly report much of the news in the not-too-distant future.

What Is the Semantic Web?

It doesn’t help that the semantic Web is such a wide-ranging concept that even advocates can’t quite agree on what it is; that’s not surprising, given that semantics has to do with the very meaning of words and symbols. The most general definition I can offer is that the semantic Web speaks to how we are moving from a Web of documents to a Web of linked data.

One might say documents are data and that would be correct. The challenge is that the World Wide Web was designed for humans, not machines. The Internet is like a digital filing cabinet overflowing with all sorts of documents, videos and pictures that people can consume but machines can’t read or understand. This means that an avalanche of facts and figures, photographs and so on is simply inaccessible or cannot be organized quickly and effectively, particularly if information is constantly changing.

You might think that Google algorithms do a fine job of finding information and organizing the Web. Yet the vision of semantic Web advocates is so big that it dwarfs Google in complexity and reach and starts to sound like a science fiction movie. These semantic Web visionaries would like humans to work with machines to make the data we create easier to access and analyze. This is the fundamental difference between linked documents (what Google does) and linked data. Tim Berners-Lee, who invented the World Wide Web, says the semantic Web will give information “… a well-defined meaning, better enabling computers and people to work in cooperation.”

The ways this machine-friendly data can be created vary in complexity. Just as you may not know exactly how your computer creates documents and stores them on your hard drive, you may never need to enter the world that uses words like “taxonomy” and its close relation “ontology” or newer tech terms like RDF (Resource Description Framework) and SPARQL. Let’s just say that the first two speak to how we can organize and find meaning in statements inside documents, while the second pair has to do with the language of machines: RDF is how we can express the relationships between different data, and SPARQL is how we can request structured data that has been optimized for use by machines. These concepts are being slowly incorporated into the Web and will, so the advocates say, give the Web the smarts it lacks.
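To make the idea of linked data a little more concrete, here is a minimal sketch in Python. It is not RDF or SPARQL itself; it only imitates their core ideas with plain tuples. Every name in it (the `ex:` identifiers, the `match` helper, the sample facts) is hypothetical and chosen purely for illustration.

```python
# Illustrative only: facts stored as (subject, predicate, object) triples,
# the basic shape of an RDF statement. All identifiers are made up.
TRIPLES = [
    ("ex:story42", "ex:mentionsPerson", "ex:MarySmith"),
    ("ex:story42", "ex:mentionsPlace", "ex:SanFrancisco"),
    ("ex:story77", "ex:mentionsPlace", "ex:SanFrancisco"),
    ("ex:MarySmith", "ex:bornIn", "ex:LosAngeles"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return the triples that fit a pattern; None acts as a wildcard.

    This loosely mirrors how a SPARQL query fixes some positions
    and leaves others as variables.
    """
    return [
        (s, p, o)
        for (s, p, o) in triples
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


# Which stories mention San Francisco?
stories = [
    s for (s, _, _) in match(
        TRIPLES, predicate="ex:mentionsPlace", obj="ex:SanFrancisco"
    )
]
print(stories)
```

The point of the sketch is that two stories written separately become connected the moment both describe the same place with the same identifier.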

If machines can look at all documents and pull out the who, what, when and where (and someday how and why) so other machines can understand them in a standardized way, then all sorts of interesting opportunities arise for how that information can be found and used. Names and places and ideas and even emotions expressed in stories become much more than just words in one story; they become the way that all of the information in many documents can be linked and layered together to create new documents and stories.

Does it sound like a super sophisticated mashup, where data from different sources such as police crime reports and maps are combined to show exactly where arrests have occurred? Yes, but police reports are simply the very tip of the huge amount of data generated every day that might be of interest to your readers or viewers if only you could get your hands on it and process it in a meaningful way. Reports on the number of flu cases, level of foreclosures, employment statistics, and home prices, if they followed semantic Web standards, could accurately and objectively (and automatically) reflect the constantly changing health of the neighborhoods in your community.
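A rough sketch of such a mashup, in Python, might look like the following. The data sets, neighborhood names, and figures are all invented, and the `combine` helper is hypothetical. It works only because every source labels its rows with the same neighborhood names, which is the kind of agreement that shared data standards are meant to provide.

```python
# Hypothetical per-neighborhood figures; names and numbers are invented.
flu_cases = {"Mission": 12, "Sunset": 4}
foreclosures = {"Mission": 3, "Sunset": 7, "Marina": 1}


def combine(**datasets):
    """Merge several {neighborhood: value} data sets into one profile each.

    A neighborhood missing from a data set gets None rather than a guess.
    """
    neighborhoods = sorted(set().union(*(d.keys() for d in datasets.values())))
    return {
        n: {name: data.get(n) for name, data in datasets.items()}
        for n in neighborhoods
    }


profiles = combine(flu_cases=flu_cases, foreclosures=foreclosures)
print(profiles["Marina"])
```

If one agency wrote “The Mission” and another “Mission District,” the merge would silently split them into separate neighborhoods, which is why standardized descriptions matter more than the code.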

Some efforts are giving us a look into the future with sophisticated computers that can find data, see how they fit with other data, and answer queries with a previously unattainable level of accuracy and relevance. You might have heard of the WolframAlpha search engine unveiled last year. The goal is to generate better answers using organized data. The trouble is that while WolframAlpha is very smart answering some database-related queries, its limited supply of data sets means that on many common questions it is quite dumb.

The semantic Web hopes to address this by asking everyone to help standardize data descriptions so that the Web becomes a giant shared database. There are several government efforts in the United States and the United Kingdom (including one headed by Berners-Lee) that will open up government data for anyone to use as they see fit. This effort will unlock a treasure trove that journalists can analyze with the potential to inspire thousands of new stories. Reporters familiar with the semantic Web concept will soon use automated research tools that identify patterns, local connections, and even conflicts of interest without having to painfully acquire and load piles of data into spreadsheets.

But if semantic Web tools become robot helpers for journalists, could the technology become so smart it will replace some reporters? Yes and no. Even advocates of the semantic Web say machines will not be able to tell complex stories with nuances and context or convey emotion or insights any time soon. However, there is a range of stories that could be told by computer programs pulling data from various sources. Imagine if data from wedding licenses could be combined with the educational and birth certificates of the happy couple along with weather statistics and their bridal registry or Facebook page. A computer could then write a typical wedding announcement:

Mary Smith, 23, a recent journalism graduate of Stanford University born in Los Angeles, and John Doe, 25, a graduate student at the University of California at Berkeley Graduate School of Journalism born in New York City, were married at St. Mary’s Cathedral in San Francisco on Tuesday, April 20, 2010, under sunny skies. They are registered at Crate & Barrel, where they are still hoping for a new toaster. Their Facebook profile says they honeymooned at Disneyland, where they shared these photos.

Right now it is difficult to do this, although Facebook is encouraging people to consider such concepts with its recent announcements about linking data. The more data that are made public and computer friendly, the more viable this idea becomes. To return to our example, if someone buys Mary and John their toaster, the semantic Web and use of linked data would allow our computer-generated story to automatically update to include their continued need for a fondue set and their new address in San Francisco.
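The mechanics behind such a story can be sketched in a few lines of Python. This is a toy under stated assumptions, not a description of any real system: the three records stand in for separate public sources, their field names are invented, and `write_announcement` is a hypothetical helper that simply fills a fixed template.

```python
# Hypothetical records standing in for separate public data sources.
license_record = {
    "bride": "Mary Smith",
    "groom": "John Doe",
    "venue": "St. Mary's Cathedral in San Francisco",
    "date": "Tuesday, April 20, 2010",
}
weather_record = {"conditions": "sunny"}
registry = {"store": "Crate & Barrel", "wanted": ["toaster", "fondue set"]}


def write_announcement(license_record, weather_record, registry):
    """Fill a fixed sentence template from the linked records."""
    text = (
        f"{license_record['bride']} and {license_record['groom']} were "
        f"married at {license_record['venue']} on {license_record['date']}, "
        f"under {weather_record['conditions']} skies."
    )
    if registry["wanted"]:
        text += (
            f" They are registered at {registry['store']}, where they are "
            f"still hoping for a {registry['wanted'][0]}."
        )
    return text


before = write_announcement(license_record, weather_record, registry)

# Someone buys the toaster: the registry data changes, and regenerating
# the story picks up the next item without anyone editing the text.
registry["wanted"].remove("toaster")
after = write_announcement(license_record, weather_record, registry)
print(after)
```

The writing itself is trivial. The hard part, as the example suggests, is getting the license office, the weather service, and the retailer to publish data that a program can find and connect to the same couple.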

The Threat to Some News

After talking to dozens of computer scientists, I am concerned that a significant amount of what passes for news—why people pick up the paper or watch a newscast—can be produced by machines that pull together data from a variety of sources and organize—even personalize—it for the consumer. Certainly sports information (including video of the winning home run) and weather and financial news will often be presented to our readers and viewers by semantic Web-related software programs that can assimilate data directly from the source and instantly present it to consumers, no newspaper editor or TV producer needed. Doubt this possibility? If you search Google for a city’s weather, it will give you the answer without sending you to a weather-related page on a news site.

One of the original grand visions for the semantic Web was that by standardizing information so computers can read it, machines could start to be our personal agents, anticipating our needs, and seeking out information on our behalf, helping to plan the perfect trip to Paris based on our past preferences (a love of art or fashion, for example, would be combined with the latest data on art shows and stores offering sales during your vacation) or to pull in data to solve problems (recommending the quickest way to work given the weather, traffic and planned road work). Imagine your local traffic reporters replaced by the science fiction vision of your own version of HAL 9000 who might not open the garage doors if the conditions outside are unsafe to drive in.

The good news for local radio station traffic reporters is that the Web is messy and filled with contradictory and unclear information that still needs human interpretation. Semantic Web advocates know the Internet will remain confusing for machines because we humans keep changing it in unexpected ways, not to mention our habit of inventing new words and meanings. If our use of poor grammar and slang is not enough, the development of the semantic Web faces another challenge. Many people, particularly those who would have to pay to have their business apply semantic Web concepts, do not see the immediate benefit of such an effort. Even if major corporations embrace it, given that the Web is the result of millions of minds each with their own standards and goals, getting everyone to agree and then act on one way to describe data is going to happen only when there are compelling economic reasons or it becomes so easy to do that there is no reason not to.

A number of news organizations, however, are already betting that making their stories more machine friendly will pay off. The New York Times, the BBC, and Thomson Reuters have embraced various facets of the semantic Web. Thomson Reuters is making a separate business of it by offering a way to tag stories, extracting information such as places and names so they can be more easily found and linked to other data. Applying semantic Web ideas to a news archive should, in theory, allow that data to be used and reused by the news organization and the world at large, reason enough for The New York Times to invest in just such an effort.

This enhanced findability is the most practical and immediate reason for journalists to pay attention to the semantic Web movement. When we stop writing clever headlines for the Web (you might have been told puns are for humans and mean nothing to computers) and instead write headlines with full names, places and descriptions of action, we are in effect tagging content for the machines, which in turn should help more people find our reporting. If you use Delicious or tag photos on Flickr, you are likewise doing the work of the semantic Web by making content computer friendly.

Looking Ahead

This move toward semantic publishing will, I forecast, over the next five years encourage the linking together of many kinds of Web content. Data will be easily gathered and Web users will reuse the information and interpret it in their own ways. Such a world will challenge our notions of copyright law, fair use, and privacy as data start to flow without attribution or even verification. You can speculate that someday in the next 10 years machines combining parts of stories will commit libel by omitting critical context from data they have gathered and presented.

Andrew Finlayson was a 2009-2010 John S. Knight journalism fellow at Stanford University, where he studied the semantic Web, video streaming, social media, and mobile technologies. He is a former director of online content for the Fox Television Stations and author of “Questions That Work,” a book about succeeding in work by asking questions that has been translated into four languages.