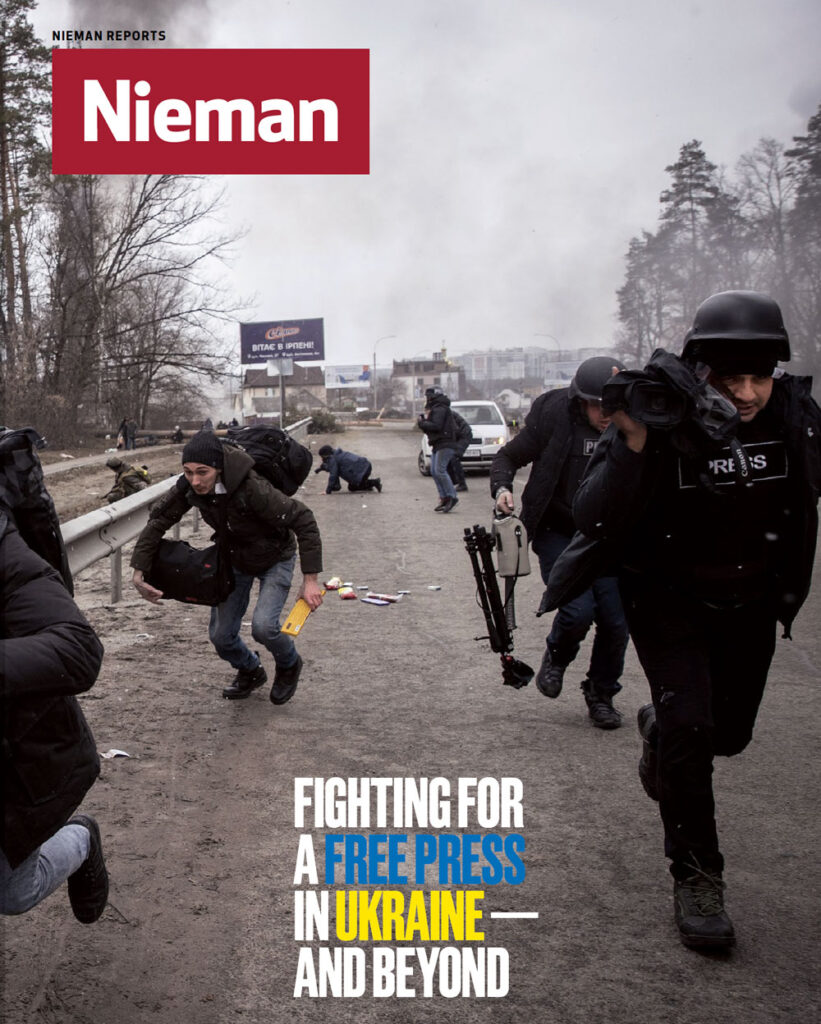

It was a fine March morning 16 years ago and the phones in my office at ABC News were ringing hard. The Associated Press had moved a story on a poll by the American Medical Association with an undeniably sexy topic: specifically, spring break sex.

Nightline was interested. Radio was raring to go. Just one thing was missing: a green light from the Polling Unit.

You can find both beauty and the uglies in this little episode, one of hundreds like it in my tenure as ABC’s director of polling. This nugget may stand out in memory simply because an organization as respected as the AMA ginned up a survey that, on inspection, was pure nonsense. It gives the lie to the notion that a seemingly reputable source is all we need.

The sad part is that, all these years later, the issue’s the same: Problematic surveys clog our inboxes and fill our column inches every bit as much now as then. And the need for vigilance — for polling standards — is as urgent as ever.

The redemption: We were empowered to shoot it down. With the support of management, we’d developed carefully thought-out poll reporting policies that included such requirements as full disclosure, probability sampling, neutral questions, and honest analysis. And we had the authority to implement them.

More soon about standards, but let’s look first at the offending AMA poll: “SEX AND INTOXICATION MORE COMMON ON SPRING BREAK, ACCORDING TO POLL OF FEMALE COLLEGE STUDENTS AND GRADUATES,” the association’s pitch shouted. “As college students depart for alcohol-filled spring breaks, the American Medical Association (AMA) releases new polling data and b-roll about the dangers of high-risk drinking during this college tradition, especially for women.”

In case you missed the drift, the supporting B-roll video, per the AMA’s release, featured “spring break party images” and “college females drinking.”

Every newsroom needs these kinds of guideposts. To this day, too few have them.

The AP’s reporting on the poll was breathless. “All but confirming what goes on in those ‘Girls Gone Wild’ videos, 83 percent of college women and graduates surveyed by the AMA said spring break involves heavier-than-usual drinking, and 74 percent said the trips result in increased sexual activity,” read its coverage. “Sizable numbers reported getting sick from drinking, and blacking out and engaging in unprotected sex or sex with more than one partner, activities that increase their risks for sexually transmitted diseases and unwanted pregnancies.”

For us, this party ended early because we applied our standards. A quick review found that the AMA poll wasn’t based on a representative, random sample of respondents; it was conducted among people who’d signed themselves up to click through questionnaires online for payment. Among the college women who participated, 71 percent had never taken a spring break trip; they were asked what they’d heard about others who’d done so — that is, rank hearsay. And the “sizable number” who reported engaging in unprotected spring break sex (as it was asked, they could have answered yes because they were with a committed partner) or undefined sexual activity with more than one partner was… 4 percent.

Don’t get me wrong: Irresponsible, risky behavior is a public health concern. But serious problems demand serious data. And this was far from reliable data — the imprimatur of the American Medical Association and The Associated Press notwithstanding.

I’ll admit to a little grim satisfaction when, almost three months after we took a bye on this piece of work, the AP issued a “corrective,” in its parlance, withdrawing its story.

Much more satisfaction came from the good side of this go-round: The simple fact that we could keep bad data off the air.

On a hype job around spring break sex, maybe this is not such a big deal. But the bigger picture cuts to the fundamental pact between news organizations and their audiences: the promise that we check out what we’re reporting, because that’s our job. We don’t just pass unverified assertions from point A to B; social media can handle that quite well, thank you. We add value along the way, through the simple act of employing our reportorial skills to establish, to the best of our ability, that the information we’re reporting is true, meaningful, and worthy of our audience’s attention. Break this deal and we may as well go sell fish.

Most news we cover does get the scrutiny it deserves. Polls, sadly, get a bye. They’re often compelling, perhaps too much so. They’re authoritative, or seem that way because they are presented with supposed mathematical certainty. They add structure to news reports that often otherwise would be based on mere anecdote and assumption.

And checking them out seems complicated, especially in understaffed newsrooms full of undertrained English majors. The plain reality is that the news media for far too long have indulged themselves in the lazy luxury of being both data hungry and math phobic. We work up a story, grab the nearest data point that seems to support our premise, slap it in, and move on — without the due diligence we bring to every other element of the reporting profession.

The stakes are serious. Bad data aren’t just funny numbers. Manufactured surveys traffic in misinformation, even downright disinformation. Often, they’re cranked up to support someone’s product, agenda, or point of view. Other times they’re the product of plain old poor practice. They come at us from all corners — corporate America and its PR agents, political players, interest and advocacy groups, and academia alike. Good data invaluably inform well-reasoned decisions. Bad data do precisely the opposite. And the difference is knowable.

Standards are the antidote. They start at the same place: disclosure. Thinking about reporting a survey? Get a detailed description of the methodology (not just a vague claim of representativeness), the full questionnaire, and the overall results to each question. If you don’t get them, it’s a no-go, full stop.

With disclosure in hand, we can see whether the sample meets reasonable standards, the questions are unbiased, and the analysis stays true to the results. We may have more questions, but this is where we dig in first, because it’s where polls go wrong: through unreliable sampling, leading and biased questions, and cherry-picked analysis.

We may be primed to look for junk data from outfits with an interest in the outcome, but that approach lacks nuance. Some advocacy groups care enough about their issues to investigate them with unbiased research. Conversely, I see as many utterly compromised surveys produced under the imprimatur of major academic institutions as from any other source, and as many junk studies published by leading news organizations as anywhere else.

Holding the fort is no small thing. Every time a news organization denies itself a story, it’s putting itself at a competitive disadvantage, especially in these days of click-counting. I argue that the alternative, having and holding standards, provides something of higher value: an integrity advantage. While admittedly that’s tougher to quantify, I still hold that it’s the one that matters.

Polling discussions inevitably turn to pre-election polls; they account for a small sliver of the survey research enterprise but win outsize attention. Suffice it to say that the more news organizations do to inform themselves about the particulars of public opinion polling, the better positioned they’ll be to report election polls, like all others, with appropriate caution, care, and insight.

You’ve noticed by now that I’ve held back on one element: precisely what polling standards should be. I’m devoted to probability-based samples — the principles of inferential statistics demand them — and highly dubious of convenience sampling, including opt-in internet polls. The American Association for Public Opinion Research reported back in 2010 that it’s wrong to claim that results of opt-in online panels are representative of any broader population. That report stands today, supported by the preponderance of the literature on the subject.

But survey methods aren’t a religion, and even researchers themselves won’t all agree on what constitutes valid, reliable, and responsible survey research, from sampling to questions to analysis. So rather than presuming to dictate standards, I take another tack: Have some.

Assign someone in the newsroom to investigate and understand survey methods. Look at polling standards that other organizations have developed. Work up your own standards, announce them, enforce them, be ready and willing to explain and defend them.

Because it matters. News organizations cover what people do, because it’s important; through polls, we cover what people think, because it’s important, too. Reliable, independent public opinion research chases away spin, speculation, and punditry. It informs our judgment in unique and irreplaceable ways, on virtually every issue we cover. In this, as in all else, we are called upon — quite simply — to get it right.

Gary Langer is president of Langer Research Associates, former director of polling at ABC News and a former reporter at The Associated Press.